When it comes to measuring and improving patient experiences, success comes from measuring all the right things – all those aspects of experiences that truly matter to patients.

If you measure the wrong things, no matter how much you improve them, your patients won’t rate you higher, recommend you to others, or feel that their care has improved.

And if you only measure some of the right things, you’ll end up with surveys that tell an incomplete story and that will lessen the impact of your quality improvement efforts.

Why the Right Domains Matter

In Patient-Reported Experience Measures (PREMs), “the things” we measure are domains of care – trust, communication, coordination, discharge experience, and more.

When key domains are missing, it’s easy to end up with glowing scores for specific areas of care but disappointing results on overall questions, like “Overall, how would you rate the quality of your care?” or “How likely are you to recommend us to friends and family?” (NPS).

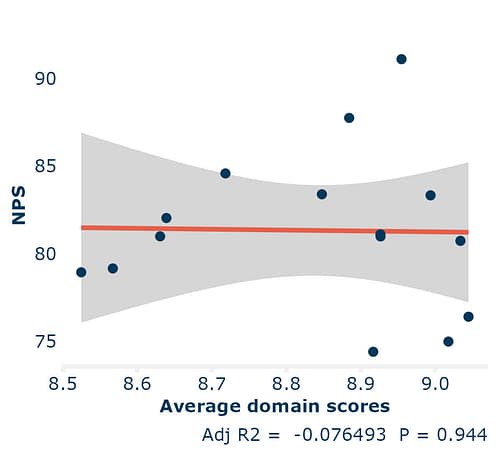

The image above is an example of a survey that left out questions on many domains that affect patient experience (such as discharge experience and confidence and trust in staff). Notice how there is no correlation between the strong average domain scores (the things they did ask about) and NPS.

(Each dot is a facility. When the dots show no relationship to the line, there is no correlation; when the dots sit close to the line, the correlation is strong.)

That gap, strong domain scores but poor overall ratings, is a red flag. It potentially means you’re missing important things that matter to patients.

How We Know When Domains Are Missing

At Cemplicity, we’ve run PREMs surveys for clients across the world, from small clinics to large national systems, and we’ve learned how to spot when something’s off.

We know questions are missing when:

Experience tells us so. Our work with partners like Picker Institute Europe and the Beryl Institute helps us recognise which domains truly shape patient experience.

Statistics reveal it. When overall ratings like NPS are less closely correlated with domain scores than expected, it signals that key domains may be missing.

Patients tell us directly. Open-ended comments often highlight issues the survey doesn’t cover. They are a critical element of good PREMs surveys – allowing patients to tell their stories and tell us about important things that the survey questions haven’t surfaced.

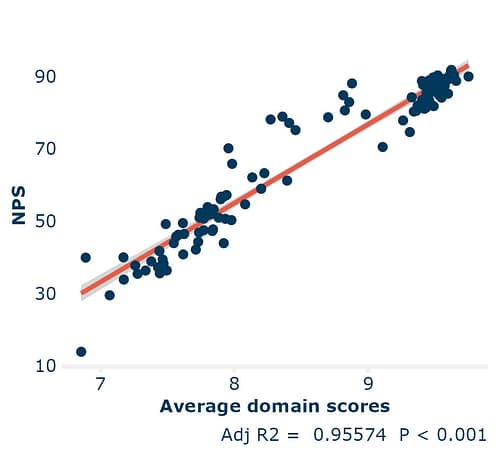

When all the right domains are included, domain scores and overall ratings move together. That’s how you know you’re measuring what matters.

In the image above we use data from 111 facilities across three continents and multiple surveys, where the questions cover all key domains of care, and the average of the domain scores is closely correlated with NPS.

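The adjusted R-squared value (Adj R2) summarises how closely the two measures track each other: a value of 1 means the average domain score fully explains the variation in NPS between facilities, and a value near 0 means it explains almost none.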
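As a rough illustration of how an adjusted R-squared figure can be calculated for one predictor (the average domain score) and one outcome (NPS), here is a minimal Python sketch. It assumes a simple straight-line fit with an intercept; the function name and the facility numbers are invented for illustration and are not Cemplicity data or Cemplicity’s actual method.

```python
def adjusted_r_squared(x, y):
    """Adjusted R-squared for a straight-line fit of y on a single predictor x."""
    n = len(x)
    if n != len(y):
        raise ValueError("x and y must have the same length")
    if n < 3:
        raise ValueError("need at least 3 facilities for one predictor")

    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if sxx == 0 or syy == 0:
        raise ValueError("x and y must each vary across facilities")

    # With one predictor and an intercept, R-squared equals the squared
    # Pearson correlation between x and y.
    r_squared = sxy ** 2 / (sxx * syy)

    # The adjustment penalises the fit for the number of predictors (here, 1).
    return 1 - (1 - r_squared) * (n - 1) / (n - 2)


# Hypothetical facility-level data: average domain score and NPS per facility.
domain_avg = [70, 75, 80, 85, 90, 95]
nps = [20, 35, 38, 52, 60, 71]
example_adj_r2 = adjusted_r_squared(domain_avg, nps)
print(round(example_adj_r2, 2))
```

One detail worth knowing: because of the adjustment, the value can dip slightly below 0 when there is no relationship at all, especially with only a handful of facilities.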

NPS Still Works – Even in Healthcare

We often hear people question the relevance of NPS in systems where patients can’t choose their provider. In addition, frontline teams tell us that NPS scoring is not intuitive, asking us why scores of 7 and 8 out of 10 appear to be excluded from the results.

These are fair questions, but our data shows that NPS remains an accurate measure of health experience and quality. With this assurance and considering how useful NPS is as a benchmarking metric, we continue to strongly recommend its use to clients.
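On the scoring question, it can help to see the standard NPS arithmetic written out. Ratings of 9 or 10 count as promoters, 0 to 6 as detractors, and 7 or 8 as passives. Passives are not thrown away: they add to neither group but still count in the total number of responses. The sketch below uses a hypothetical function name and made-up ratings.

```python
def net_promoter_score(ratings):
    """Return NPS (from -100 to 100) for a list of 0-10 recommend ratings."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")

    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    # Passives (7s and 8s) are in neither count, but they are part of
    # len(ratings), so they pull the score towards zero.
    return 100 * (promoters - detractors) / len(ratings)


# Made-up example: 4 promoters, 4 passives, 2 detractors out of 10 responses.
example_ratings = [10, 9, 9, 8, 7, 7, 6, 3, 10, 8]
example_nps = net_promoter_score(example_ratings)  # (4 - 2) / 10 * 100 = 20.0
```

So a patient who answers 7 or 8 still shapes the result: one promoter alone gives an NPS of 100, while one promoter plus one passive gives 50.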

The relationship between NPS and Quality of Care questions

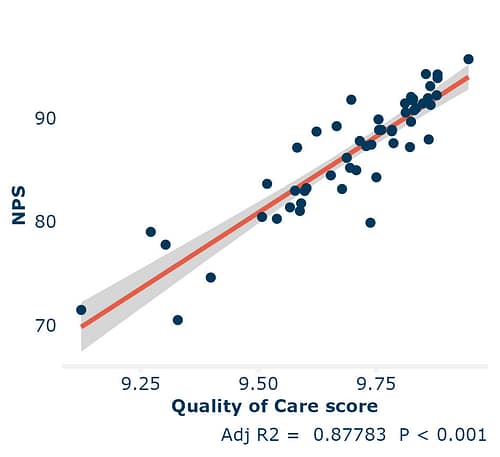

Across our surveys, we see a tight correlation between NPS and overall Quality of Care ratings. Even when we switch out NPS for “Quality of Care” in Key Driver Analysis, the same themes emerge as the biggest influences on patient experiences.

In the image above, we present data from 53 facilities across two regions whose surveys ask about both likelihood to recommend (NPS) and how patients rate the overall quality of care they received.

So, while you could use either measure, NPS gives you something extra – the ability to benchmark across providers, systems, and even industries.

NPS connects healthcare experience to the broader world of service excellence.

Relevance Beats Brevity

Despite all the evidence, one myth keeps resurfacing: shorter surveys drive higher response rates. In healthcare, that’s just not true. Our data shows that survey length doesn’t hurt response rates.

Above, we have also shown how dropping questions and leaving gaps by not covering key domains of care can undermine all your efforts to improve NPS or overall care quality.

Having said that survey length doesn’t affect response rates, we need to acknowledge that irrelevant questions do hurt them. It’s important to make sure that each patient’s pathway through your survey only asks them about elements of care that they experienced. For example, you wouldn’t ask day stay patients about the full meal service, or ask questions about allied health services if you know the patient didn’t receive any.
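To make the idea of a tailored pathway concrete, here is a minimal Python sketch of question routing. It assumes each question is tagged with the services a patient must have received before it is shown. The class, the tag names, and the question wording are all hypothetical and are not taken from any Cemplicity survey.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    text: str
    # Services the patient must have received for this question to apply.
    requires: frozenset = frozenset()


def questions_for(services_received, question_bank):
    """Keep only the questions whose required services the patient received."""
    return [q for q in question_bank if q.requires.issubset(services_received)]


# Hypothetical question bank.
question_bank = [
    Question("Did staff explain things in a way you could understand?"),
    Question("How would you rate the meals you were served?",
             frozenset({"meal_service"})),
    Question("Did allied health staff involve you in decisions about your care?",
             frozenset({"allied_health"})),
]

# A day stay patient with no meal service or allied health contact
# sees only the question that applies to everyone.
day_stay_questions = questions_for(set(), question_bank)

# An inpatient who received meals, but no allied health care, also sees
# the meals question.
inpatient_questions = questions_for({"meal_service"}, question_bank)
```

The survey can then stay rich for patients with a long journey without putting irrelevant questions in front of those with a short one.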

At Cemplicity, we design PREMs across the entire patient journey, from admission to recovery. Sometimes that means a single-question check-in. But for core post-discharge PREMs, a richer, well-structured survey is essential.

Because when you ask the right questions you can improve patients’ experiences, not just measure them.

The Bottom Line

A great PREMs survey doesn’t just collect data – it captures what matters.

When your questions reflect the full patient journey, from trust and communication to discharge and recovery, you’ll see alignment between domain scores and overall ratings. That’s when your insights start driving real improvement.

So, before you trim another question or drop another domain, ask yourself: Are we measuring everything that really matters to our patients?
